4 Ways Storage Has Improved in Windows Server 2012

With companies facing increasing storage and performance demands as they build private clouds, Microsoft’s Windows Server 2012 introduces new technologies to help reduce costs and improve application response.

Here are four helpful ways in which storage has improved with Windows Server 2012.

1. Server Message Block 3.0

Server Message Block (SMB) is the protocol used in Windows for file and printer sharing across the network. Windows Server 2012 ships with SMB 3.0, in which SMB Direct adds support for network cards that have Remote Direct Memory Access (RDMA). RDMA allows network cards to bypass the Windows kernel and read and write directly to memory, reducing the load on the file server’s CPU and providing high-throughput, low-latency networking. This feature is especially useful for Hyper-V and SQL Server, as the resulting speeds are similar to local disk input and output.

SMB Direct works with SMB Multichannel to maximize network throughput using TCP/IP receive-side scaling (RSS), better connection resiliency, load balancing and automatic adjustment when new network paths are detected. SMB Direct requires at least two Windows Server 2012 machines, each with an RDMA-compatible network card.

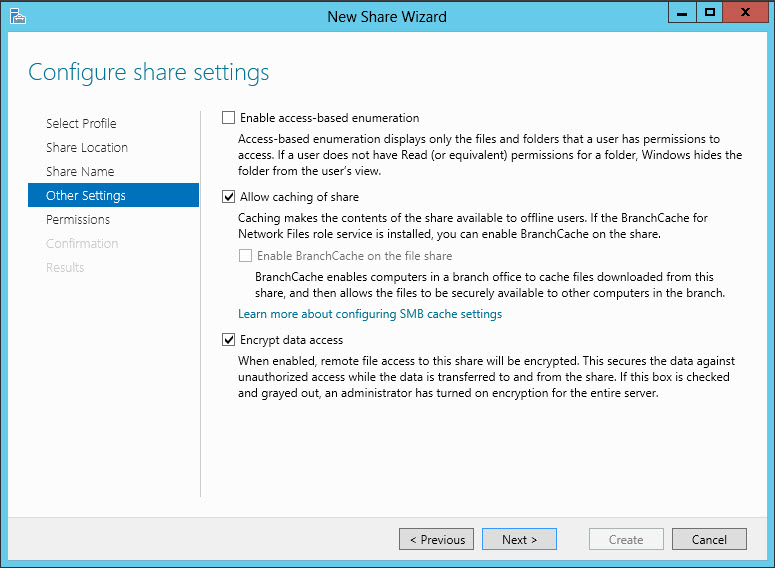

Windows Server 2012 SMB Encryption ensures data is protected over the wire and can be enabled for individual shares. Unlike IPsec, which can also encrypt SMB traffic, SMB Encryption requires no configuration or planning. Previous versions of SMB only signed network packets to prevent spoofing.

Figure 1 – Enabling SMB Encryption for a new share in Windows Server 2012

2. Offloaded Data Transfer (ODX)

If you have an ODX-compatible storage area network (SAN), you can copy large chunks of data between Windows Server 2012 file servers without the servers reading and writing that data themselves. The storage array carries out the copy, which helps reduce the load on your servers.

Microsoft claims that ODX, like SMB Direct, can provide speeds comparable to file operations on local storage. This is especially useful for companies with a virtual desktop infrastructure (VDI), as it should allow Hyper-V to move entire virtual machines between servers with much lower CPU load and network bandwidth use.

3. Windows Deduplication

Single Instance Storage (SiS) capability has existed in Windows Server for many years, but because it operates at the file level, it can be inefficient. Data deduplication in Windows Server 2012 works below the file level, on blocks (chunks) of data, meaning that a small change to a file won’t result in the entire file being stored twice.
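
To make the difference concrete, here is a minimal Python sketch. It is not Microsoft’s implementation. It compares how many bytes must be kept for a file and a slightly edited copy when only whole files are shared versus when identical chunks are shared. The fixed 4 KB chunk size, the use of SHA-256 and the function names are all assumptions chosen for illustration; the real engine’s chunking scheme is not modeled.

```python
import hashlib

CHUNK_SIZE = 4096  # assumption: fixed-size chunks, for illustration only


def file_level_bytes(files):
    """Bytes stored when only byte-identical whole files are shared."""
    unique = {hashlib.sha256(data).digest(): len(data) for data in files}
    return sum(unique.values())


def chunk_level_bytes(files, chunk_size=CHUNK_SIZE):
    """Bytes stored when identical chunks are shared across all files."""
    unique = {}
    for data in files:
        for offset in range(0, len(data), chunk_size):
            chunk = data[offset:offset + chunk_size]
            unique[hashlib.sha256(chunk).digest()] = len(chunk)
    return sum(unique.values())


# Hypothetical data: a 1 MiB file made of 256 distinct 4 KB chunks...
original = b"".join(i.to_bytes(4, "big") * (CHUNK_SIZE // 4) for i in range(256))

# ...and a copy of it with a single byte changed.
edited = bytearray(original)
edited[10] ^= 0xFF
edited = bytes(edited)

whole_file = file_level_bytes([original, edited])   # both files kept in full
chunked = chunk_level_bytes([original, edited])     # only one extra chunk kept
print(whole_file, chunked)
```

In this toy case the whole-file approach keeps two full copies, while the chunk-level approach keeps the original chunks plus the one chunk that changed.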

Deduplication is enabled as a feature of the File and Storage Services role and is completely transparent to users and applications. A volume with deduplication enabled can serve slightly fewer users, around 10 percent fewer. To avoid performance issues with files in active use, the deduplication engine passes over files that are being written to, and those files are not deduplicated for a set period of time.

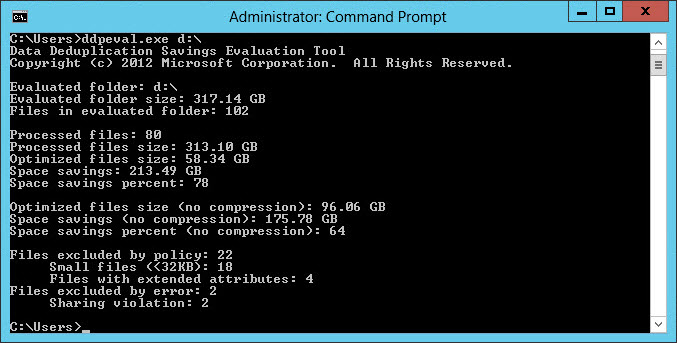

Server 2012 includes the ddpeval.exe command line tool, which estimates the potential space savings on a volume if deduplication were to be enabled. The tool can also be copied over to Windows Server 2008 R2 to provide information for use as part of a business case for upgrading to Server 2012.

Figure 2 – The results of running the ddpeval.exe tool, showing a potential savings of 64 percent.

There are some limitations with deduplication in Windows Server 2012. It can’t be used on system drives, on volumes that aren’t formatted with NTFS, or on volumes that contain running virtual machines or SQL Server databases. However, you can exclude certain file types and locations.

4. Storage Virtualization

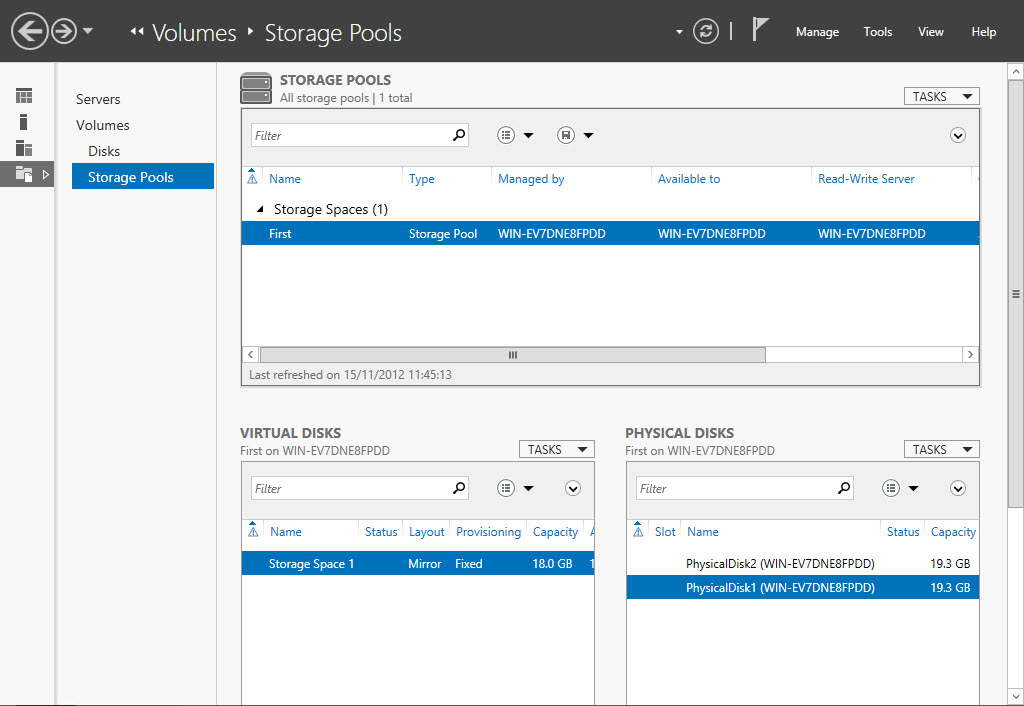

Storage Pools form the basis of new storage virtualization features in Windows Server 2012. A pool consists of one or more physical disks, and more disks can be added at any time. Permissions can also be assigned to Storage Pools, which is useful for cloud service providers that want to provide services to different customers on the same hardware, sometimes referred to as a multitenant deployment.

A Storage Pool can accommodate one or more Storage Spaces, each of which appears in File Explorer under a standard drive letter. Storage Spaces are, in fact, virtual disks carved out of the pool’s capacity. Thin provisioning allows a Storage Space to be much larger than the physical capacity currently available to it. When used capacity reaches 70 percent, a warning is issued to add more physical storage. Storage Spaces also work with cluster nodes and can be failed over automatically as required.
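
The thin-provisioning idea can be sketched as a small Python model. This is an illustration only, not how Windows implements it: the `ThinPool` class, its method names and the choice to measure the 70 percent threshold against the pool’s installed physical capacity are all assumptions.

```python
from dataclasses import dataclass, field

WARN_PERCENT = 70  # the 70 percent figure cited above


@dataclass
class ThinPool:
    """Hypothetical model of a pool hosting thin-provisioned spaces."""
    physical_gb: int                            # capacity actually installed
    spaces: dict = field(default_factory=dict)  # name -> provisioned size (GB)
    used_gb: int = 0                            # capacity consumed by real data

    def create_space(self, name, provisioned_gb):
        # Thin provisioning: the advertised size may exceed physical capacity.
        self.spaces[name] = provisioned_gb

    def needs_more_disks(self):
        # Integer arithmetic avoids floating-point edge cases at exactly 70%.
        return self.used_gb * 100 >= self.physical_gb * WARN_PERCENT

    def write(self, gb):
        if self.used_gb + gb > self.physical_gb:
            raise RuntimeError("pool is out of physical capacity")
        self.used_gb += gb
        return self.needs_more_disks()

    def add_disk(self, gb):
        self.physical_gb += gb


pool = ThinPool(physical_gb=1000)
pool.create_space("Data", provisioned_gb=10000)  # ten times the real capacity
first = pool.write(600)    # 60 percent used: no warning yet
second = pool.write(100)   # 70 percent used: time to add disks
pool.add_disk(1000)        # growing the pool clears the warning
```

The point of the model is that the advertised size and the physical capacity are tracked separately, so storage can be bought as data actually accumulates.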

The Resilient File System (ReFS) was developed at the same time as Storage Spaces to provide better resiliency to data corruption. ReFS decreases the likelihood of corruption by using background data scrubbing (error checking and correction) and protects data in the case of a power failure or cluster failover.

When creating a Storage Space, you can specify RAID mirroring or parity for resiliency, and the underlying technology allows different types and sizes of drive to be used, something that’s not usually possible with hardware RAID solutions. However, the flexibility of Storage Spaces can backfire. For example, there’s nothing to stop an IT worker from creating a RAID array using several disks connected to a single USB bus.

Figure 3 – A new Storage Space in Windows Server 2012

Anyone expecting this type of configuration to perform like hardware RAID using fixed SATA drives is likely to be disappointed. Also, bear in mind that parity, where redundant information is written alongside the data so that it can be rebuilt after a drive failure, is intended for volumes that are mostly read from and only occasionally written to.
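
As a rough illustration of how parity lets lost data be rebuilt, and why writes cost more than reads, here is a minimal Python sketch based on XOR parity. It is a textbook model, not the Storage Spaces on-disk layout; the block contents and function names are made up for the example.

```python
from functools import reduce


def xor_blocks(blocks):
    """XOR equal-length byte blocks together, position by position."""
    return bytes(reduce(lambda a, b: a ^ b, column) for column in zip(*blocks))


def update_parity(parity, old_block, new_block):
    """Small write: the old data and old parity must be read before writing."""
    return xor_blocks([parity, old_block, new_block])


# Hypothetical stripe: three data blocks, one per disk, plus one parity block.
data = [b"AAAA", b"BBBB", b"CCCC"]
parity = xor_blocks(data)

# Simulate losing the second disk and rebuild its block from the survivors.
rebuilt = xor_blocks([data[0], data[2], parity])

# Overwriting one block also forces the parity block to be recomputed.
new_parity = update_parity(parity, data[1], b"ZZZZ")
```

Reading needs no extra work, but each small write in this model touches the old data, the old parity, the new data and the new parity, which is one reason parity suits data that is written rarely.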

Adding Up to a Storage ROI

The storage virtualization, resilience and protocol changes in Windows Server 2012 can significantly improve performance and reduce storage costs. Although there are some limitations with the new technologies in their current form, Windows Deduplication has the potential to provide a good return on your investment in Windows Server 2012 by minimizing storage growth.

Additionally, smaller companies will find that the RAID mirroring feature in Storage Spaces, together with the ability to add drives on the fly, makes it easier to manage storage without investing in costly hardware solutions.