Is Your Business Ready for Image Cognition?

In March, U.S. footwear retailer Shoe Carnival announced its foray into visual search.

Forget browsing by keyword and check boxes. Simply snap a picture of the shoe you like and, powered by a back-end 3D visual search engine from Toronto-based Slyce, the retailer instantly returns the closest possible matches from thousands of offerings.

The concept of search has made the leap from bar and QR codes to scanning the physical world. The technology appears to have reached its tipping point and now stands poised to reshape the search experience.

Jamie Tan, an MIT-trained physicist who is now CEO of the visual search company Imaginestics, explains that in the early 2000s, visual search at Google and other companies meant hiring people to examine images and videos, recognize their contents and tag each file with the appropriate descriptors, such as “hat,” “shoes” or “bag.” Users who typed a keyword received media tagged with that word. But the approach was accurate only to the category level: a search for “shoes” could return shoes, but not the particular shoe the user had in mind.
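To make the category-level limitation concrete, here is a minimal Python sketch of tag-based keyword search over a hypothetical catalog of hand-tagged image files. It illustrates the general idea only; it is not a description of how Google or any other company actually built its system.

```python
from collections import defaultdict

# Hypothetical catalog: each file was tagged by a human reviewer
# with a single coarse category descriptor.
TAGGED_FILES = {
    "red_sneaker.jpg": ["shoes"],
    "black_oxford.jpg": ["shoes"],
    "straw_hat.jpg": ["hat"],
    "leather_tote.jpg": ["bag"],
}


def build_index(tagged_files):
    """Build an inverted index mapping each tag to the files carrying it."""
    index = defaultdict(set)
    for filename, tags in tagged_files.items():
        for tag in tags:
            index[tag.lower()].add(filename)
    return index


def keyword_search(index, query):
    """Return every file tagged with the query word, sorted by name."""
    return sorted(index.get(query.lower(), set()))


index = build_index(TAGGED_FILES)
print(keyword_search(index, "shoes"))
```

A query for "shoes" returns both the sneaker and the oxford with no way to rank one above the other, because the tags carry no information finer than the category.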

The Growth of Artificial Intelligence

A few years ago, rapid advances in artificial intelligence, particularly the machine-learning algorithms known as “deep learning,” coupled with the rise of extremely fast parallel computation on graphics processors, enabled massive leaps in image search.

Suddenly, machines could take over the role of tagging by recognizing objects based on a much fuzzier set of inputs. Recognition alone, however, is not the endgame.

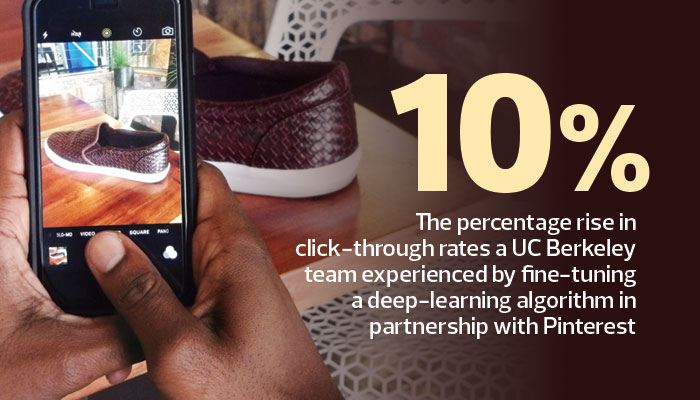

SOURCE: University of California, Berkeley and Pinterest, “Visual Search at Pinterest,” August 2015

“What is a user’s intent with an image, say, of a shoe?” asks Tan. “Is it to find an exact match or a similar match in different styles? In retail, the user often wants a recommendation engine. We look for an exact match. If you have a broken part on an aircraft maintenance line, you need that exact part. We’re doing matching based on object geometries inside the pictures.”
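The difference between a recommendation and an exact match can be sketched as two queries over the same data. This minimal Python example assumes each catalog item has already been reduced to a numeric descriptor; the vectors, part names and `tolerance` value are all hypothetical. A similar-match query ranks items by Euclidean distance and returns the closest few, while an exact-match query keeps only items within a tight tolerance. It is an illustration of the distinction, not Imaginestics' geometry-matching method, which the article does not describe.

```python
import math

# Hypothetical feature vectors. In a real system these might come from a
# trained model or from geometric shape descriptors; here they are made up.
CATALOG = {
    "part_A17": (1.00, 0.50, 0.20),
    "part_A18": (1.00, 0.52, 0.21),
    "part_B02": (0.40, 0.90, 0.70),
    "part_C33": (0.10, 0.10, 0.95),
}


def similar_matches(query, catalog, k=2):
    """Recommendation-style search: the k catalog items closest to the query."""
    ranked = sorted(catalog, key=lambda name: math.dist(query, catalog[name]))
    return ranked[:k]


def exact_matches(query, catalog, tolerance=0.01):
    """Exact-match search: only items within a tight (hypothetical) tolerance."""
    return [
        name for name in catalog
        if math.dist(query, catalog[name]) <= tolerance
    ]


query = (1.00, 0.50, 0.20)
print(similar_matches(query, CATALOG))
print(exact_matches(query, CATALOG))
```

With this query, the similar-match search returns two nearly identical parts, which suits a shopper browsing styles, while the exact-match search returns only `part_A17`, which is what a maintenance crew replacing a broken part needs. `math.dist` requires Python 3.8 or later.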

This kind of exact, shape-based matching carries over well into related fields, such as 3D models in computer-aided design (CAD) and so-called Industry 4.0.

“We are moving toward that revolution where you manufacture only in small quantities, but you customize the part,” Tan adds. “You need a technology that lets you change or reuse your design to make small changes. That is very important in the engineering domain right now. We will be able to find 3D models based on user input. It could be an image, another 3D model, or even something you sketched on a napkin.”