Fluid, Dynamic Data Is Essential for AI, but Complicates Storage Needs

When people think about AI, they often think about the massive sets of training data needed to bring a model up. But data preparation, which includes data extraction, data enrichment, classification, embedding, indexing and semantic search, is also resource-intensive and requires high-performance storage infrastructure.

“Whether you're training a model, you're fine-tuning a model or you're retrieving context that the model doesn't know about to inform its decisions, all of those things require access — fast access — to accurate, recent data,” said Jacob Liberman, director of enterprise product for NVIDIA, while speaking during his session “Accelerating the Path to Production: The Evolution of Enterprise Storage to Deliver AI-Ready Data” at NVIDIA GTC 2026.

Prior to the AI era, the hottest conversations in data and storage were about amassing Big Data and pooling it into large storage systems, known as data lakes, for retrieval. But Liberman believes AI is shifting the analogy away from data lakes and toward something with a lot more movement.

“The data is not only complex, but it's growing all the time. You know, people talk about data as a lake, but it's more like a river,” said Liberman. “It's flowing, it's changing — it's constantly changing. So, the first time that you prepare your data is not going to be the last time. You have to continuously prepare your data for AI as it changes.”
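One way to picture continuous preparation is a pipeline that fingerprints each document and redoes the expensive work only when the content changes. The Python sketch below is illustrative only and is not drawn from Liberman's session: the `prepare` function is a hypothetical stand-in for real extraction, enrichment, embedding and indexing steps, and the in-memory `index` dictionary is an assumed substitute for whatever store an enterprise would actually use.

```python
import hashlib


def content_hash(text: str) -> str:
    """Return a stable fingerprint of a document's content."""
    return hashlib.sha256(text.encode("utf-8")).hexdigest()


def prepare(text: str) -> dict:
    """Hypothetical stand-in for the real preparation work
    (extraction, enrichment, embedding, indexing)."""
    return {"tokens": text.lower().split()}


def refresh_index(documents: dict, index: dict) -> list:
    """Re-prepare only new or changed documents; drop deleted ones.

    `documents` maps doc id -> current text.
    `index` maps doc id -> {"hash": ..., "prepared": ...} and is updated in place.
    Returns the sorted ids that were (re)prepared on this pass.
    """
    updated = []
    for doc_id, text in documents.items():
        digest = content_hash(text)
        entry = index.get(doc_id)
        # Skip documents whose fingerprint matches the last prepared version.
        if entry is None or entry["hash"] != digest:
            index[doc_id] = {"hash": digest, "prepared": prepare(text)}
            updated.append(doc_id)
    # Remove entries whose source document no longer exists.
    for doc_id in list(index):
        if doc_id not in documents:
            del index[doc_id]
    return sorted(updated)
```

Running `refresh_index` on a schedule, or whenever the underlying storage reports a change, keeps prepared data in step with the source without reprocessing documents that have not changed.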

Part of the reason for this shift is that with AI, enterprises are dealing with unstructured data in ways that they haven’t before.

“The data itself is multimodal and heterogeneous. You're not just dealing with one type of data,” said Liberman. “Traditional enterprise applications have siloed data sources, specific data for each application, but an AI agent doesn't — isn't constrained by those boundaries. It needs to tap into all the data sources in order to make a good decision.”

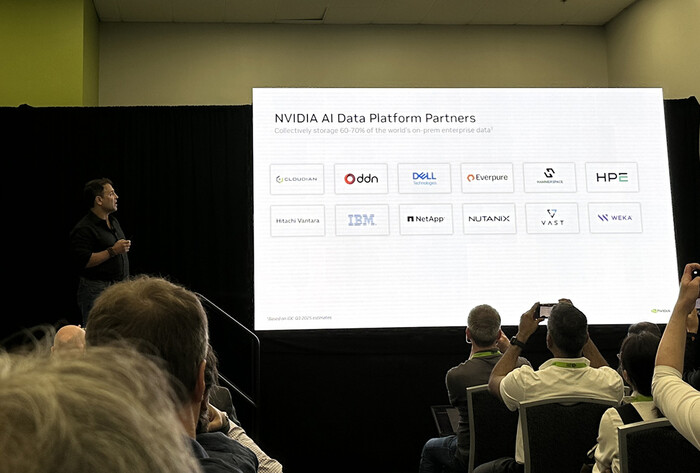

To make storage technology better suited to agentic AI, NVIDIA is working with storage industry leaders, including IBM, Dell and NetApp, that collectively account for 60%-70% of the world’s on-premises enterprise data, with the goal of making AI-ready storage infrastructure a reality.